Tag: MARK BOVE

The Flames Burn Higher

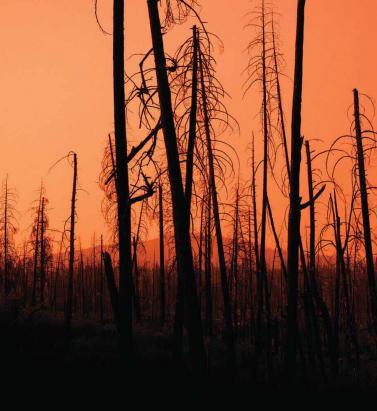

May 20, 2019

With California experiencing two of the most devastating seasons on record in consecutive years, EXPOSURE asks whether wildfire now needs to be considered a peak peril

Some of the statistics for the 2018 U.S. wildfire season appear normal. The season was a below-average year for the number of fires reported — 58,083 incidents represented only 84 percent of the 10-year average. The number of acres burned — 8,767,492 acres — was marginally above average at 132 percent.

Two factors, however, made it exceptional. First, for the second consecutive year, the Great Basin experienced intense wildfire activity, with some 2.1 million acres burned — 233 percent of the 10-year average. And second, the fires destroyed 25,790 structures, with California accounting for over 23,600 of the structures destroyed, compared to a 10-year U.S. annual average of 2,701 residences, according to the National Interagency Fire Center.

As of January 28, 2019, reported insured losses for the November 2018 California wildfires, which included the Camp and Woolsey Fires, were at US$11.4 billion, according to the California Department of Insurance. Add to this the insured losses of US$11.79 billion reported in January 2018 for the October and December 2017 California events, and these two consecutive wildfire seasons constitute the most devastating on record for the wildfire-exposed state.

Reaching its Peak?

Such colossal losses in consecutive years have sent shockwaves through the (re)insurance industry and are forcing a reassessment of wildfire’s secondary status in the peril hierarchy.

According to Mark Bove, natural catastrophe solutions manager at Munich Reinsurance America, wildfire’s status needs to be elevated in highly exposed areas. “Wildfire should certainly be considered a peak peril in areas such as California and the Intermountain West,” he states, “but not for the nation as a whole.”

His views are echoed by Chris Folkman, senior director of product management at RMS.
“Wildfire can no longer be viewed purely as a secondary peril in these exposed territories,” he says. “Six of the top 10 fires for structural destruction have occurred in the last 10 years in the U.S., while seven of the top 10, and 10 of the top 20 most destructive wildfires in California history have occurred since 2015. The industry now needs to achieve a level of maturity with regard to wildfire that is on a par with that of hurricane or flood.”

However, he is wary about potential knee-jerk reactions to this hike in wildfire-related losses. “There is a strong parallel between the 2017-18 wildfire seasons and the 2004-05 hurricane seasons in terms of people’s gut instincts. 2004 saw four hurricanes make landfall in Florida, with K-R-W [Hurricanes Katrina, Rita and Wilma] causing massive devastation in 2005. At the time, some pockets of the industry wondered out loud if parts of Florida were uninsurable. Yet the next decade was relatively benign in terms of hurricane activity.

“The key is to adopt a balanced, long-term view,” says Folkman. “At RMS, we think that fire severity is here to stay, while the frequency of big events may remain volatile from year-to-year.”

A Fundamental Re-evaluation

The California losses are forcing (re)insurers to overhaul their approach to wildfire, both at the individual risk and portfolio management levels.

“The 2017 and 2018 California wildfires have forced one of the biggest re-evaluations of a natural peril since Hurricane Andrew in 1992,” believes Bove. “For both California wildfire and Hurricane Andrew, the industry didn’t fully comprehend the potential loss severities. Catastrophe models were relatively new and had not gained market-wide adoption, and many organizations were not systematically monitoring and limiting large accumulation exposure in high-risk areas.
As a result, the shocks to the industry were similar.”

For decades, approaches to underwriting have focused on the wildland-urban interface (WUI), which represents the area where exposure and vegetation meet. However, exposure levels in these areas are increasing sharply. Together with excessive amounts of burnable vegetation, extended wildfire seasons, and climate-change-driven increases in temperature and extreme weather conditions, this growth is causing a significant hike in exposure potential for the (re)insurance industry.

A recent report published in PNAS entitled “Rapid Growth of the U.S. Wildland-Urban Interface Raises Wildfire Risk” showed that between 1990 and 2010 the WUI area grew by 72,973 square miles (189,000 square kilometers) — larger than Washington State. The report stated: “Even though the WUI occupies less than one-tenth of the land area of the conterminous United States, 43 percent of all new houses were built there, and 61 percent of all new WUI houses were built in areas that were already in the WUI in 1990 (and remain in the WUI in 2010).”

“The WUI has formed a central component of how wildfire risk has been underwritten,” explains Folkman, “but you cannot simply adopt a black-and-white approach to risk selection based on properties within or outside of the zone. As recent losses, and in particular the 2017 Northern California wildfires, have shown, regions outside of the WUI zone considered low risk can still experience devastating losses.”

For Bove, while focus on the WUI is appropriate, particularly given the Coffey Park disaster during the 2017 Tubbs Fire, there is not enough focus on the intermix areas. This is the area where properties are interspersed with vegetation.

“In some ways, the wildfire risk to intermix communities is worse than that at the interface,” he explains.
“In an intermix fire, you have both a wildfire and an urban conflagration impacting the town at the same time, while in interface locations the fire has largely transitioned to an urban fire.

“In an intermix community,” he continues, “the terrain is often more challenging and limits firefighter access to the fire as well as evacuation routes for local residents. Also, many intermix locations are far from large urban centers, limiting the amount of firefighting resources immediately available to start combatting the blaze, and this increases the potential for a fire in high-wind conditions to become a significant threat. Most likely we’ll see more scrutiny and investigation of risk in intermix towns across the nation after the Camp Fire’s decimation of Paradise, California.”

Rethinking Wildfire Analysis

According to Folkman, the need for greater market maturity around wildfire will require a rethink of how the industry currently analyzes the exposure and the tools it uses.

“Historically, the industry has relied primarily upon deterministic tools to quantify U.S. wildfire risk,” he says, “which relate overall frequency and severity of events to the presence of fuel and climate conditions, such as high winds, low moisture and high temperatures.”

While such tools can prove valuable for addressing “typical” wildland fire events, such as the 2017 Thomas Fire in Southern California, their flaws have been exposed by other recent losses.

“Such tools insufficiently address major catastrophic events that occur beyond the WUI into areas of dense exposure,” explains Folkman, “such as the Tubbs Fire in Northern California in 2017.
Further, the unprecedented severity of recent wildfire events has exposed the weaknesses in maintaining a historically based deterministic approach.”

While the scale of the 2017-18 losses has focused (re)insurer attention on California, companies must also recognize that the scope for potential catastrophic wildfire risk extends beyond the boundaries of the western U.S.

“While the frequency and severity of large, damaging fires is lower outside California,” says Bove, “there are many areas where the risk is far from negligible.” While acknowledging that the broader western U.S. is seeing increased risk due to WUI expansion, he adds: “Many may be surprised that similar wildfire risk exists across most of the southeastern U.S., as well as sections of the northeastern U.S., like in the Pine Barrens of southern New Jersey.”

As well as addressing the geographical gaps in wildfire analysis, Folkman believes the industry must also recognize the data gaps limiting its understanding.

“There are a number of areas that are understated in underwriting practices currently, such as the far-ranging impacts of ember accumulations and their potential to ignite urban conflagrations, as well as vulnerability of particular structures and mitigation measures such as defensible space and fire-resistant roof coverings.”

In generating its US$9 billion to US$13 billion loss estimate for the Camp and Woolsey Fires, RMS used its recently launched North America Wildfire High-Definition (HD) Models to simulate the ignition, fire spread, ember accumulations and smoke dispersion of the fires.

“In assessing the contribution of embers, for example,” Folkman states, “we modeled the accumulation of embers, their wind-driven travel and their contribution to burn hazard both within and beyond the fire perimeter. Average ember contributions to structure damage and destruction is approximately 15 percent, but in a wind-driven event such as the Tubbs Fire this figure is much higher.
This was a key factor in the urban conflagration in Coffey Park.”

The model also provides full contiguous U.S. coverage, and includes other model innovations such as ignition and footprint simulations for 50,000 years, flexible occurrence definitions, smoke and evacuation loss across and beyond the fire perimeter, and vulnerability and mitigation measures on which RMS collaborated with the Insurance Institute for Business & Home Safety.

Smoke damage, which leads to loss from evacuation orders and contents replacement, is often overlooked in risk assessments, despite composing a tangible portion of the loss, says Folkman. “These are very high-frequency, medium-sized losses and must be considered. The Woolsey Fire saw 260,000 people evacuated, incurring hotel, meal and transport-related expenses. Add to this smoke damage, which often results in high-value contents replacement, and you have a potential sea of medium-sized claims that can contribute significantly to the overall loss.”

A further data resolution challenge relates to property characteristics. While primary property attribute data is typically well captured, believes Bove, many secondary characteristics key to wildfire are either not captured or not consistently captured.

“This leaves the industry overly reliant on both average model weightings and risk scoring tools. For example, information about defensible spaces, roofing and siding materials, protecting vents and soffits from ember attacks, these are just a few of the additional fields that the industry will need to start capturing to better assess wildfire risk to a property.”

A Highly Complex Peril

Bove is, however, conscious of the simple fact that “wildfire behavior is extremely complex and non-linear.” He continues: “While visiting Paradise, I saw properties that did everything correct with regard to wildfire mitigation but still burned and risks that did everything wrong and survived.
However, mitigation efforts can improve the probability that a structure survives.”

“With more data on historical fires,” Folkman concludes, “more research into mitigation measures and increasing awareness of the risk, wildfire exposure can be addressed and managed. But it requires a team mentality, with all parties — (re)insurers, homeowners, communities, policymakers and land-use planners — all playing their part.”

Getting Wildfire Under Control

May 10, 2018

The extreme conditions of 2017 demonstrated the need for much greater data resolution on wildfire in North America

The 2017 California wildfire season was record-breaking on virtually every front. Some 1.25 million acres were torched by over 9,000 wildfire events during the period, with October to December seeing some of the most devastating fires ever recorded in the region*.

From an insurance perspective, according to the California Department of Insurance, as of January 31, 2018, insurers had received almost 45,000 claims relating to losses in the region of US$11.8 billion. These losses included damage or total loss to over 30,000 homes and 4,300 businesses.

On a countrywide level, the total was over 66,000 wildfires that burned some 9.8 million acres across North America, according to the National Interagency Fire Center. This compares to 2016 when there were 65,575 wildfires and 5.4 million acres burned.

Caught off Guard

“2017 took us by surprise,” says Tania Schoennagel, research scientist at the University of Colorado, Boulder. “Unlike conditions now [March 2018], 2017 winter and early spring were moist with decent snowpack and no significant drought recorded.”

Yet despite seemingly benign conditions, it rapidly became the third-largest wildfire year since 1960, she explains. “This was primarily due to rapid warming and drying in the late spring and summer of 2017, with parts of the West witnessing some of the driest and warmest periods on record during the summer and remarkably into the late fall.

“Additionally, moist conditions in early spring promoted build-up of fine fuels which burn more easily when hot and dry,” continues Schoennagel.
“This combination rapidly set up conditions conducive to burning that continued longer than usual, making for a big fire year.”

While Southern California has experienced major wildfire activity in recent years, until 2017 Northern California had only experienced “minor-to-moderate” events, according to Mark Bove, research meteorologist, risk accumulation, Munich Reinsurance America, Inc.

“In fact, the region had not seen a major, damaging fire outbreak since the Oakland Hills firestorm in 1991, a US$1.7 billion loss at the time,” he explains. “Since then, large damaging fires have repeatedly scorched parts of Southern California, and as a result much of the industry has focused on wildfire risk in that region due to the higher frequency and due to the severity of recent events.

“Although the frequency of large, damaging fires may be lower in Northern California than in the southern half of the state,” he adds, “the Wine Country fires vividly illustrated not only that extreme loss events are possible in both locales, but that loss magnitudes can be larger in Northern California. A US$11 billion wildfire loss in Napa and Sonoma counties may not have been on the radar screen for the insurance industry prior to 2017, but such losses are now.”

Smoke on the Horizon

Looking ahead, it seems increasingly likely that such events will grow in severity and frequency as climate-related conditions create drier, more fire-conducive environments in North America.

“Since 1985, more than 50 percent of the increase in the area burned by wildfire in the forests of the Western U.S. has been attributed to anthropogenic climate change,” states Schoennagel.
“Further warming is expected, in the range of 2 to 4 degrees Fahrenheit in the next few decades, which will spark ever more wildfires, perhaps beyond the ability of many Western communities to cope.”

“Climate change is causing California and the American Southwest to be warmer and drier, leading to an expansion of the fire season in the region,” says Bove. “In addition, warmer temperatures increase the rate of evapotranspiration in plants and evaporation of soil moisture. This means that drought conditions return to California faster today than in the past, increasing the fire risk.”

While he believes there is still a degree of uncertainty as to whether the frequency and severity of wildfires in North America has actually changed over the past few decades, there is no doubt that exposure levels are increasing and will continue to do so.

“The risk of a wildfire impacting a densely populated area has increased dramatically,” states Bove. “Most of the increase in wildfire risk comes from socioeconomic factors, like the continued development of residential communities along the wildland-urban interface and the increasing value and quantity of both real estate and personal property.”

Breaches in the Data

Yet while the threat of wildfire is increasing, the ability to accurately quantify that increased exposure potential is limited by a lack of the granular historical data, both on a countrywide basis and even in highly exposed fire regions such as California, needed to determine the probability of an event occurring.

“Even though there is data on thousands of historical fires over the past half-century,” says Bove, “it is of insufficient quantity and resolution to reliably determine the frequency of fires at all locations across the U.S.
“This is particularly true in states and regions where wildfires are less common, but still holds true in high-risk states like California,” he continues. “This lack of data, as well as the fact that the wildfire risk can be dramatically different on the opposite ends of a city, postcode or even a single street, makes it difficult to determine risk-adequate rates.”

According to Max Moritz, Cooperative Extension specialist in fire at the University of California, current approaches to fire mapping and modeling are also based too much on fire-specific data.

“A lot of the risk data we have comes from a bottom-up view of the fire risk itself. Methodologies are usually based on the Rothermel Fire Spread equation, which looks at spread rates, flame length, heat release, et cetera. But often we’re ignoring critical data such as wind patterns, ignition loads, vulnerability characteristics, spatial relationships, as well as longer-term climate patterns, the length of the fire season and the emergence of fire-weather corridors.”

Ground-level data is also lacking, he believes. “Without very localized data you’re not factoring in things like the unique landscape characteristics of particular areas that can make them less prone to fire risk even in high-risk areas.”

Further, data on mitigation measures at the individual community and property level is in short supply. “Currently, (re)insurers commonly receive data around the construction, occupancy and age of a given risk,” explains Bove, “information that is critical for the assessment of a wind or earthquake risk.”

However, the information needed to properly assess wildfire risk is typically not captured, for example whether the roof covering or siding is combustible. Bove says it is important to know if soffits and vents are open-air or protected by a metal covering, for instance. “Information about a home’s upkeep and surrounding environment is critical as well,” he adds.
At Ground Level

While wildfire may not be as data intensive as a peril such as flood, it is almost as demanding, especially on computational capacity. It requires simulating stochastic or scenario events all the way from ignition through to spread, creating realistic footprints that can capture what the risk is and the physical mechanisms that contribute to its spread into populated environments.

The RMS® North America Wildfire HD Model capitalizes on this expanded computational capacity and improved data sets to bring probabilistic capabilities to bear on the peril for the first time across the entirety of the contiguous U.S. and Canada.

Using a high-resolution simulation grid, the model provides a clear understanding of factors such as the vegetation levels, the density of buildings, the vulnerability of individual structures and the extent of defensible space.

The model also utilizes weather data based on re-analysis of historical weather observations to create a distribution of conditions from which to simulate stochastic years. That means that for a given location, the model can generate a weather time series that includes wind speed and direction, temperature, moisture levels, et cetera.

As wildfire risk is set to increase in frequency and severity due to a number of factors ranging from climate change to expansions of the wildland-urban interface caused by urban development in fire-prone areas, the industry now has to be able to live with that and understand how it alters the risk landscape.

On the Wind

Embers have long been recognized as a key factor in fire spread, either advancing the main burn or igniting spot fires some distance from the originating source. Yet despite this, current wildfire models do not effectively factor in ember travel, according to Max Moritz, from the University of California. “Post-fire studies show that the vast majority of buildings in the U.S.
burn from the inside out due to embers entering the property through exposed vents and other entry points,” he says. “However, most of the fire spread models available today struggle to precisely recreate the fire parameters and are ineffective at modeling ember travel.”

During the Tubbs Fire, the most destructive wildfire event in California’s history, embers carried on extreme ‘Diablo’ winds sparked ignitions up to two kilometers from the flame front. The rapid transport of embers not only created a more fast-moving fire, with Tubbs covering some 30 to 40 kilometers within hours of initial ignition, but also sparked devastating ignitions in areas believed to be at zero risk of fire, such as Coffey Park, Santa Rosa. This highly built-up area experienced an urban conflagration due to ember-fueled ignitions.

“Embers can fly long distances and ignite fires far away from their source,” explains Markus Steuer, consultant, corporate underwriting at Munich Re. “In the case of the Tubbs Fire they jumped over a freeway and ignited the fire in Coffey Park, where more than 1,000 homes were destroyed. This spot fire was not connected to the main fire. In risk models or hazard maps this has to be considered. Firebrands can fly over natural or man-made fire breaks and damage can occur at some distance away from the densely vegetated areas.”

For the first time, the RMS North America Wildfire HD Model enables the explicit simulation of ember transport and accumulation, allowing users to detail the impact of embers beyond the fire perimeters.

The simulation capabilities extend beyond the traditional fuel-based fire simulations, and enable users to capture the extent to which large accumulations of firebrands and embers can be lofted beyond the perimeters of the fire itself and spark ignitions in dense residential and commercial areas.

As was shown in the Tubbs Fire, areas not previously considered at threat of wildfire were exposed by the ember transport.
The introduction of ember simulation capability allows the industry to quantify the complete wildfire risk appropriately across North America wildfire portfolios.