Tag: WILDFIRE MODEL

The Data Driving Wildfire Exposure Reduction

May 05, 2021

Recent research by RMS® in collaboration with the CIPR and IBHS is helping move the dial on wildfire risk assessment, providing a benefit-cost analysis of science-based mitigation strategies.

The significant increase in the impact of wildfire activity in North America in the last four years has sparked an evolving insurance problem. Across California, for example, 235,250 homeowners’ insurance policies faced non-renewal in 2019, an increase of 31 percent over the previous year. In addition, areas of moderate to very-high risk saw a 61 percent increase; narrow that to the top 10 counties and the increase in non-renewals exceeded 200 percent.

A consequence of this insurance availability and affordability emergency is that many residents have sought refuge in the California FAIR (Fair Access to Insurance Requirements) Plan, a statewide insurance pool that provides wildfire cover for dwellings and commercial properties. In recent years, the surge in wildfire events has driven a huge rise in people purchasing cover via the plan, with numbers more than doubling in highly exposed areas.

In November 2020, in an effort to temporarily help the private insurance market and alleviate pressure on the FAIR Plan, California Insurance Commissioner Ricardo Lara took the extraordinary step of introducing a mandatory one-year moratorium on insurance companies non-renewing or canceling residential property insurance policies. The move was designed to help the 18 percent of California’s residential insurance market affected by the record 2020 wildfire season.

The Challenge of Finding an Exit

“The FAIR Plan was only ever designed as a temporary landing spot for those struggling to find fire-related insurance cover, with homeowners ultimately expected to shift back into the private market after a period of time,” explains Jeff Czajkowski, director of the Center for Insurance Policy and Research (CIPR) at the National Association of Insurance Commissioners.
“The challenge that they have now, however, is that the lack of affordable cover means for many of those who enter the plan there is potentially no real exit strategy.”

These concerns are echoed by Matt Nielsen, senior director of global governmental and regulatory affairs at RMS. “Eventually you run into similar problems to those experienced in Florida when they sought to address the issue of hurricane cover. You simply end up with so many policies within the plan that you have to reassess the risk transfer mechanism itself and look at who is actually paying for it.”

The most expedient way to develop an exit strategy is to reduce wildfire exposure levels, which in turn will stimulate activity in the private insurance market and lead to the improved availability and affordability of cover in exposed regions. Yet therein lies the challenge. There is a fundamental stumbling block to this endeavor unique to California’s insurance market and enshrined in regulation.

California Code of Regulations, Article 4 – Determination of Reasonable Rates, §2644.5 – Catastrophe Adjustment: “In those insurance lines and coverages where catastrophes occur, the catastrophic losses of any one accident year in the recorded period are replaced by a loading based on a multi-year, long-term average of catastrophe claims. The number of years over which the average shall be calculated shall be at least 20 years for homeowners’ multiple peril fire.
…” In effect, this regulation prevents the use of predictive modeling, the mainstay of exposure assessment and accurate insurance pricing, and limits the scope of applicable data to the last 20 years. That might be acceptable if wildfire constituted a relatively stable exposure and if all aspects of the risk could be effectively captured in a period of two decades – but as the last few years have demonstrated, that is clearly not the case.

As Roy Wright, president and CEO of the Insurance Institute for Business & Home Safety (IBHS), states: “Simply looking back might be interesting, but is it relevant? I don’t mean that the data gathered over the last 20 years is irrelevant, but on its own it is insufficient to understand and get ahead of wildfire risk, particularly when you apply the last four years to the 20-year retrospective, which have significantly skewed the market. That is when catastrophe models provide the analytical means to rationalize such deviations and to anticipate how this threat might evolve.”

The insurance industry has long viewed wildfire as an attritional risk, but such a perspective is no longer valid, believes Michael Young, senior director of product management at RMS. “It is only in the last five years that we are starting to see wildfire damaging thousands of buildings in a single event,” he says. “We are reaching the level where the technology associated with cat modeling has become critical because without that analysis you can’t predict future trends.
The significant increase in related losses means that it has the potential to be a solvency-impacting peril as well as a rate-impacting one.”

Addressing the Insurance Equation

“Wildfire by its nature is a hyper-localized peril, which makes accurate assessment very data dependent,” Young continues. “Yet historically, insurers have relied upon wildfire risk scores to guide renewal decisions or to write new business in the wildland-urban interface (WUI). Such approaches often rely on zip-code-level data, which does not factor in environmental, community or structure-level mitigation measures. That lack of ground-level data to inform underwriting decisions means, often, non-renewal is the only feasible approach in highly exposed areas for insurers.”

California is the only U.S. state to stipulate that predictive modeling cannot be applied to insurance rate adjustments. However, this limitation is currently coming under significant scrutiny from all angles. In recent months, the California Department of Insurance has convened two separate investigatory hearings to address areas including:

- Insurance availability and affordability
- Need for consistent home-hardening standards and insurance incentives for mitigation
- Lack of transparency from insurers on wildfire risk scores and rate justification

In support of efforts to demonstrate the need for a more data-driven, model-based approach to stimulating a healthy private insurance market, the CIPR, in conjunction with IBHS and RMS, has worked to facilitate greater collaboration between regulators, the scientific community and risk modelers in an effort to raise awareness of the value that catastrophe models can bring.

“The Department of Insurance and all other stakeholders recognize that until we can create a well-functioning insurance market for wildfire risk, there will be no winners,” says Czajkowski. “That is why we are working as a conduit to bring all parties to the table to facilitate productive dialogue.
A key part of this process is raising awareness on the part of the regulator both around the methodology and depth of science and data that underpins the cat model outputs.”

In November 2020, as part of this process, CIPR, RMS and IBHS co-produced a report entitled “Application of Wildfire Mitigation to Insured Property Exposure.”

“The aim of the report is to demonstrate the ability of cat models to reflect structure-specific and community-level mitigation measures,” Czajkowski continues, “based on the mitigation recommendations of IBHS and the National Fire Protection Association’s Firewise USA recognition program. It details the model outputs showing the benefits of these mitigation activities for multiple locations across California, Oregon and Colorado. Based on that data, we also produced a basic benefit-cost analysis of these measures to illustrate the potential economic viability of home-hardening measures.”

Applying the Hard Science

The study aims to demonstrate that learnings from building science research can be reflected in a catastrophe model framework and proactively inform decision-making around the reduction of wildfire risk for residential homeowners in wildfire zones.

As Wright explains, the hard science that IBHS has developed around wildfire is critical to any model-based mitigation drive. “For any model to be successful, it needs to be based on the physical science. In the case of wildfire, for example, our research has shown that flame-driven ignitions account for only a small portion of losses, while the vast majority are ember-driven.

“Our facilities at IBHS enable us to conduct full-scale testing using single- and multi-story buildings, assessing components that influence exposure such as roofing materials, vents, decks and fences, so we can generate hard data on the various impacts of flame, ember, smoke and radiant heat.
We can provide the physical science that is needed to analyze secondary and tertiary modifiers, factors that drive so much of the output generated by the models.”

To assess various mitigation features, the report used the RMS® U.S. Wildfire HD Model to quantify hypothetical loss reduction benefits in nine communities across California, Colorado and Oregon. The simulated reductions in losses were compared to the costs associated with the mitigation measures, while a benefit-cost methodology was applied to assess the economic effectiveness of the two overall mitigation strategies modeled: structural mitigation and vegetation management.

The multitude of factors that influence the survivability of a structure exposed to wildfire, including the site hazard parameters and structural characteristics of the property, were assessed in the model for 1,161 locations across the nine communities (three in each state). Each structure was assigned a set of primary characteristics based on a series of assumptions.

For each property, RMS performed five separate mitigation case runs of the model, adjusting the vulnerability curves based on specific site hazard and secondary modifier model selections. This produced a neutral setting with all secondary modifiers set to zero (no penalty or credit applied), plus two structural mitigation scenarios and two vegetation management scenarios combined with the structural mitigation.
The Direct Value of Mitigation

Given the scale of the report, which is itself relatively small in terms of the overall scope of wildfire losses, it is only possible to provide a snapshot of some of the key findings here. The full report is available to download.

Focusing on the three communities in California, namely Upper Deerwood (high risk), Berry Creek (high risk) and Oroville (medium risk), the neutral setting produced an average annual loss (AAL) per structure of $3,169, $637 and $35, respectively.

Figure 1: Financial impact of adjusting the secondary modifiers to produce both a structural (STR) credit and penalty

Figure 1 shows the impact of adjusting the secondary modifiers to produce a structural (STR) maximum credit (i.e., a well-built, wildfire-resistant structure) and a structural maximum penalty (i.e., a poorly built structure with limited resistance). In the case of Upper Deerwood, the applied credit saw an average reduction of $899 (i.e., wildfire-avoided losses) compared to the neutral setting, while conversely the penalty increased the AAL by an average of $2,409. For Berry Creek, the figures were a reduction of $222 and an increase of $633. And for Oroville, which had a relatively low neutral setting, the average reduction was $26.

Figure 2: Financial analysis of the mean AAL difference for structural (STR) and vegetation (VEG) credit and penalty scenarios

In Figure 2, the mean AAL difference for the combined structural and vegetation (VEG) scenarios in Upper Deerwood was a reduction of $2,018 under the credit and an increase of $2,511 under the penalty. The data therefore showed that moving from a poorly built to a well-built structure reduced expected wildfire losses by $4,529 on average. For Berry Creek, this shift resulted in an average savings of $1,092, while for Oroville there was no meaningful difference.
The authors then applied three cost scenarios based on a range of wildfire mitigation costs: low ($20,000 structural, $25,000 structural and vegetation); medium ($40,000 structural, $50,000 structural and vegetation); and high ($60,000 structural, $75,000 structural and vegetation).

Focusing again on the findings for California, the model outputs showed that in the low-cost scenario (with a 1 percent discount rate), both structural-only and combined structural and vegetation mitigation were economically efficient on average in the Upper Deerwood community over 10-, 25- and 50-year time horizons. For Berry Creek, economic efficiency was achieved on average in the 50-year time horizon for structural mitigation, and in the 25- and 50-year time horizons for structural and vegetation mitigation.

Moving the Needle Forward

As Young recognizes, the scope of the report is insufficient to provide the depth of data necessary to drive a market shift, but it is valuable in the context of ongoing dialogue.

“This report is essentially a teaser to show that based on modeled data, the potential exists to reduce wildfire risk by adopting mitigation strategies in a way that is economically viable for all parties,” he says. “The key aspect about introducing mitigation appropriately in the context of insurance is to allow the right differential of rate. It is to give the right signals without allowing that differential to restrict the availability of insurance by pricing people out of the market.”

That ability to differentiate at the localized level will be critical to ending what he describes as the “peanut butter” approach (spreading the risk) and reducing the need to adopt a non-renewal strategy for highly exposed areas.

“You have to be able to operate at a much more granular level,” he explains, “both spatially and in terms of the attributes of the structure, given the hyperlocalized nature of the wildfire peril.
Risk-based pricing at the individual location level will see a shift away from the peanut-butter approach and reduce the need for widespread non-renewals. You need to be able to factor in not only the physical attributes, but also the actions by the homeowner to reduce their risk.

“It is imperative we create an environment in which mitigation measures are acknowledged, that the right incentives are applied and that credit is given for steps taken by the property owner and the community. But to reach that point, you must start with the modeled output. Without that analysis based on detailed, scientific data to guide the decision-making process, it will be incredibly difficult for the market to move forward.”

As Czajkowski concludes: “There is no doubt that more research is absolutely needed at a more granular level across a wider playing field to fully demonstrate the value of these risk mitigation measures. However, what this report does is provide a solid foundation upon which to stimulate further dialogue and provide the momentum for the continuation of the critical data-driven work that is required to help reduce exposure to wildfire.”
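To make the benefit-cost comparison summarized in this article more concrete, the following is a minimal Python sketch rather than the report's actual methodology. It assumes that the annual benefit of mitigation is the penalty-to-credit AAL difference quoted in the article, that this benefit is constant and received at the end of each year, and that the mitigation cost is paid once up front. The function names and the way the structural-only benefit is derived (adding the Figure 1 credit and penalty figures) are illustrative assumptions, so the ratios it prints should not be expected to match the report's findings in every case.

```python
def annuity_factor(rate: float, years: int) -> float:
    """Present value of $1 received at the end of each year for `years` years."""
    if rate == 0:
        return float(years)
    return (1 - (1 + rate) ** -years) / rate


def benefit_cost_ratio(avoided_aal: float, cost: float, rate: float, years: int) -> float:
    """Discounted avoided losses divided by a one-time, up-front mitigation cost."""
    return avoided_aal * annuity_factor(rate, years) / cost


# Avoided average annual loss per structure, in dollars, from the article.
# Structural-only values are an assumption: Figure 1 credit plus penalty.
avoided_aal = {
    ("Upper Deerwood", "structural"): 899 + 2409,
    ("Upper Deerwood", "structural + vegetation"): 4529,
    ("Berry Creek", "structural"): 222 + 633,
    ("Berry Creek", "structural + vegetation"): 1092,
}

# Low-cost scenario from the article, in dollars.
low_cost = {"structural": 20_000, "structural + vegetation": 25_000}

DISCOUNT_RATE = 0.01  # 1 percent, as stated in the article

for (community, strategy), benefit in avoided_aal.items():
    for years in (10, 25, 50):
        ratio = benefit_cost_ratio(benefit, low_cost[strategy], DISCOUNT_RATE, years)
        verdict = "efficient" if ratio > 1 else "not efficient"
        print(f"{community:15s} {strategy:24s} {years:2d} yr  BCR={ratio:4.2f}  {verdict}")
```

A ratio above 1 means the discounted avoided losses exceed the mitigation cost over the chosen horizon. Under these simplified assumptions, longer horizons and higher baseline risk both push the ratio up, which is the general pattern the article describes for Upper Deerwood and Berry Creek.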

Data From the Ashes

May 05, 2021

Five years on from the wildfire that devastated Fort McMurray, the event has proved critical to developing a much deeper understanding of wildfire losses in Canada.

In May 2016, Fort McMurray, Alberta, became the location of Canada’s costliest wildfire event to date. In total, some 2,400 structures were destroyed by the fire, with a similar number designated as uninhabitable. Fortunately, the evacuation of the 90,000-strong population meant that no lives were lost as a direct result of the fires.

From an insurance perspective, the estimated CA$4 billion loss elevated wildfire risk to a whole new level. This was a figure now comparable to the extreme fire losses experienced in wildfire-exposed regions such as California, and established wildfire as a peak natural peril second only to flood in Canada.

However, the event also exposed gaps in the market’s understanding of wildfire events and highlighted the lack of actionable exposure data. In the U.S., significant investment had been made in enhancing the scale and granularity of publicly available wildfire data through bodies such as the United States Geological Survey, but the resolution of data available through equivalent parties in Canada was not at the same standard.

A Question of Scale

Making direct wildfire comparisons between the U.S. and Canada is difficult for multiple reasons. Take, for example, population density. Canada’s total population is approximately 37.6 million, spread over a landmass of 9.985 million square kilometers (3.855 million square miles), while California has a population of around 39.5 million, inhabiting an area of 423,970 square kilometers (163,668 square miles).

The potential for wildfire events impacting populated areas is therefore significantly less in Canada. In fact, because of the reduced potential exposure, wildfires in Canada are typically allowed to burn for longer and over a wider area, whereas in the U.S.
there is a significant focus on fire suppression. This willingness to let fires burn has the benefit of reducing levels of vegetation and fuel buildup. Also, more fires in the country are a result of natural rather than human-caused ignitions and occur in hard-to-access areas with low population exposure. Sixty percent of fires in Canada are attributed to human causes.

But as Fort McMurray showed, the potential for disaster clearly exists. In fact, the event was one of a series of large-scale fires in recent years that have impacted populated areas in Canada, including the Okanagan Mountain Fire, the McLure Fire, the Slave Lake Fire, and the Williams Lake and Elephant Hills Fire.

“The challenge for the insurance industry in Canada,” explains Michael Young, senior director, product management, at RMS, “is therefore more about measuring the potential impact of wildfire on smaller pockets of exposure, rather than the same issues of frequency and severity of event that are prevalent in the U.S.”

Regions at Risk

What is interesting to note is just how much of Canada’s populated territory is potentially exposed to wildfire events, despite a relatively low population density overall.

A 2017 report entitled Mapping Canadian Wildland Fire Interface Areas, published by the Canadian Forest Service, stated that the threat of wildfire impacting populated areas will inevitably increase as a result of the combined impacts of climate change and the development of more interface area “due to changes in human land use.” This includes urban and rural growth, the establishment of new industrial facilities and the building of more second homes.
According to the study, the wildland-human interface in Canada spans 116.5 million hectares (288 million acres), which is 13.8 percent of the country’s total land area or 20.7 percent of its total wildland fuel area. The wildland-urban interface (WUI) covers 32.3 million hectares (79.8 million acres), which is 3.8 percent of land area or 5.8 percent of fuel area. The WUI for industrial areas (known as WUI-Ind) covers 10.5 million hectares (25.9 million acres), which is 1.3 percent of land area or 1.9 percent of fuel area.

In terms of the provinces and territories with the largest interface areas, the report highlighted Quebec, Alberta, Ontario and British Columbia as being most exposed. At a more granular level, it stated that in populated areas such as cities, towns and settlements, 96 percent of locations had “at least some WUI within a five-kilometer buffer,” while 60 percent (327 of the total 544 areas) also had over 500 hectares (1,200 acres) of WUI within a five-kilometer buffer.

Data: A Closer Look

Fort McMurray has, in some ways, become an epicenter for the generation of wildfire-related data in Canada. According to a study by the Institute for Catastrophic Loss Reduction, which looked at why certain homes survived, the Fort McMurray Wildfire “followed a well-recognized pattern known as the wildland/urban interface disaster sequence.”

The detailed study, which was conducted in the aftermath of the disaster, showed that 90 percent of properties in the areas affected by the wildfire survived the event. Further, “surviving homes were generally rated with ‘Low’ to ‘Moderate’ hazard levels and exhibited many of the attributes promoted by recommended FireSmart Canada guidelines.”

FireSmart Canada is an organization designed to promote greater wildfire resilience across the country.
Similar to Firewise in the U.S., it has created a series of hazard factors spanning aspects such as building structure, vegetation/fuel, topography and ignition sites. It also offers a hazard assessment system that considers hazard layers and adoption rates of resilience measures.

According to the study: “Tabulation by hazard level shows that 94 percent of paired comparisons of all urban and country residential situations rated as having either ‘Low’ or ‘Moderate’ hazard levels survived the wildfire. Collectively, vegetation/fuel conditions accounted for 49 percent of the total hazard rating at homes that survived and 62 percent of total hazard at homes that failed to survive.”

Accessing the Data

In many ways, the findings of the Fort McMurray study are reassuring, as they clearly demonstrate the positive impact of structural and topographical risk mitigation measures in enhancing wildfire resilience, essentially validating the underlying scientific data. Further, the data shows that “a strong, positive correlation exists between home destruction during wildfire events and untreated vegetation within 30 meters of homes.”

“What the level of survivability in Fort McMurray showed was just how important structural hardening is,” Young explains. “It is not simply about defensible space, managing vegetation and ensuring sufficient distance from the WUI. These are clearly critical components of wildfire resilience, but by factoring in structural mitigation measures you greatly increase levels of survivability, even during urban conflagration events as extreme as Fort McMurray.”

From an insurance perspective, access to these combined datasets is vital to effective exposure analysis and portfolio management.
There is a concerted drive on the part of the Canadian insurance industry to adopt a more data-intensive approach to managing wildfire exposure. Enhancing data availability across the region has been a key focus at RMS® in recent years, and efforts have culminated in the launch of the RMS® Canada Wildfire HD Model. It offers the most complete view of the country’s wildfire risk currently available and is the only probabilistic model available to the market that covers all 10 provinces. “The hazard framework that the model is built on spans all of the critical wildfire components, including landscape and fire behavior patterns, fire weather simulations, fire and smoke spread, urban conflagration and ember intensity,” says Young. “In each instance, the hazard component has been precisely calibrated to reflect the dynamics, assumptions and practices that are specific to Canada. “For example, the model’s fire spread component has been adjusted to reflect the fact that fires tend to burn for longer and over a wider area in the country, which reflects the watching brief that is often applied to managing wildfire events, as opposed to the more suppression-focused approach in the U.S.,” he continues. “Also, the urban conflagration component helps insurers address the issue of extreme tail-risk events such as Fort McMurray.” Another key model differentiator is the wildfire vulnerability function, which automatically determines key risk parameters based on high-resolution data. In fact, RMS has put considerable efforts into building out the underlying datasets by blending multiple different information sources to generate fire, smoke and ember footprints at 50-meter resolution, as opposed to the standard 250-meter resolution of the publicly available data. Critical site hazard data such as slope, distance to vegetation, and fuel types can be set against primary building modifiers such as construction, number of stories and year built. 
A further secondary modifier layer enables insurers to apply building-specific mitigation measures such as roof characteristics, ember accumulators and whether the property has cladding or a deck. Given the influence of such components on building survivability during the Fort McMurray Fire, such data is vital to exposure analysis at the local level.

A Changing Market

“The market has long recognized that greater data resolution is vital to adopting a more sophisticated approach to wildfire risk,” Young says. “As we worked to develop this new model, it was clear from our discussions with clients that there was an unmet need to have access to hard data that they could ‘hang numbers from.’ There was simply too little data to enable insurers to address issues such as potential return periods, accumulation risk and countrywide portfolio management.”

The ability to access more granular data might also be well timed in response to a growing shift in the information required during the insurance process. There is a concerted effort taking place across the Canadian insurance market to reduce the information burden on policyholders during the submission process. At the same time, there is a shift toward risk-based pricing.

“As we see this dynamic evolve,” Young says, “the reduced amount of risk information sourced from the insured will place greater importance on the need to apply modeled data to how insurance companies manage and price risk accurately. Companies are also increasingly looking at the potential to adopt risk-based pricing, a process that is dependent on the ability to apply exposure analysis at the individual location level. So, it is clear from the coming together of these multiple market shifts that access to granular data is more important to the Canadian wildfire market than ever.”

The Flames Burn Higher

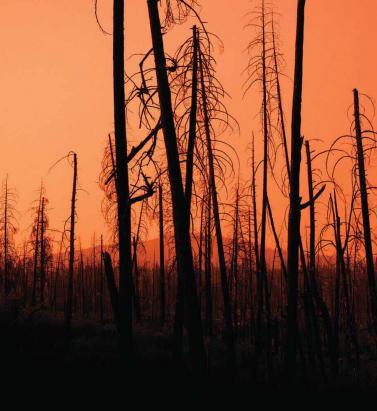

May 20, 2019

With California experiencing two of the most devastating seasons on record in consecutive years, EXPOSURE asks whether wildfire now needs to be considered a peak peril.

Some of the statistics for the 2018 U.S. wildfire season appear normal. The season was a below-average year for the number of fires reported: 58,083 incidents represented only 84 percent of the 10-year average. The number of acres burned, at 8,767,492, was marginally above average at 132 percent.

Two factors, however, made it exceptional. First, for the second consecutive year, the Great Basin experienced intense wildfire activity, with some 2.1 million acres burned, or 233 percent of the 10-year average. And second, the fires destroyed 25,790 structures, with California accounting for over 23,600 of the structures destroyed, compared to a 10-year U.S. annual average of 2,701 residences, according to the National Interagency Fire Center.

As of January 28, 2019, reported insured losses for the November 2018 California wildfires, which included the Camp and Woolsey Fires, were at US$11.4 billion, according to the California Department of Insurance. Add to this the insured losses of US$11.79 billion reported in January 2018 for the October and December 2017 California events, and these two consecutive wildfire seasons constitute the most devastating on record for the wildfire-exposed state.

Reaching its Peak?

Such colossal losses in consecutive years have sent shockwaves through the (re)insurance industry and are forcing a reassessment of wildfire’s secondary status in the peril hierarchy.

According to Mark Bove, natural catastrophe solutions manager at Munich Reinsurance America, wildfire’s status needs to be elevated in highly exposed areas. “Wildfire should certainly be considered a peak peril in areas such as California and the Intermountain West,” he states, “but not for the nation as a whole.”

His views are echoed by Chris Folkman, senior director of product management at RMS.
“Wildfire can no longer be viewed purely as a secondary peril in these exposed territories,” he says. “Six of the top 10 fires for structural destruction have occurred in the last 10 years in the U.S., while seven of the top 10, and 10 of the top 20 most destructive wildfires in California history have occurred since 2015. The industry now needs to achieve a level of maturity with regard to wildfire that is on a par with that of hurricane or flood.”

“Average ember contributions to structure damage and destruction is approximately 15 percent, but in a wind-driven event such as the Tubbs Fire this figure is much higher”
Chris Folkman, RMS

However, he is wary about potential knee-jerk reactions to this hike in wildfire-related losses. “There is a strong parallel between the 2017-18 wildfire seasons and the 2004-05 hurricane seasons in terms of people’s gut instincts. 2004 saw four hurricanes make landfall in Florida, with K-R-W causing massive devastation in 2005. At the time, some pockets of the industry wondered out loud if parts of Florida were uninsurable. Yet the next decade was relatively benign in terms of hurricane activity.

“The key is to adopt a balanced, long-term view,” thinks Folkman. “At RMS, we think that fire severity is here to stay, while the frequency of big events may remain volatile from year-to-year.”

A Fundamental Re-evaluation

The California losses are forcing (re)insurers to overhaul their approach to wildfire, both at the individual risk and portfolio management levels.

“The 2017 and 2018 California wildfires have forced one of the biggest re-evaluations of a natural peril since Hurricane Andrew in 1992,” believes Bove. “For both California wildfire and Hurricane Andrew, the industry didn’t fully comprehend the potential loss severities. Catastrophe models were relatively new and had not gained market-wide adoption, and many organizations were not systematically monitoring and limiting large accumulation exposure in high-risk areas.
As a result, the shocks to the industry were similar.” For decades, approaches to underwriting have focused on the wildland-urban interface (WUI), which represents the area where exposure and vegetation meet. However, exposure levels in these areas are increasing sharply. Combined with excessive amounts of burnable vegetation, extended wildfire seasons, and climate-change-driven increases in temperature and extreme weather conditions, these factors are combining to cause a significant hike in exposure potential for the (re)insurance industry. A recent report published in PNAS entitled “Rapid Growth of the U.S. Wildland-Urban Interface Raises Wildfire Risk” showed that between 1990 and 2010 the new WUI area increased by 72,973 square miles (189,000 square kilometers) — larger than Washington State. The report stated: “Even though the WUI occupies less than one-tenth of the land area of the conterminous United States, 43 percent of all new houses were built there, and 61 percent of all new WUI houses were built in areas that were already in the WUI in 1990 (and remain in the WUI in 2010).” “The WUI has formed a central component of how wildfire risk has been underwritten,” explains Folkman, “but you cannot simply adopt a black-and-white approach to risk selection based on properties within or outside of the zone. As recent losses, and in particular the 2017 Northern California wildfires, have shown, regions outside of the WUI zone considered low risk can still experience devastating losses.” For Bove, while focus on the WUI is appropriate, particularly given the Coffey Park disaster during the 2017 Tubbs Fire, there is not enough focus on the intermix areas. This is the area where properties are interspersed with vegetation. “In some ways, the wildfire risk to intermix communities is worse than that at the interface,” he explains. 
“In an intermix fire, you have both a wildfire and an urban conflagration impacting the town at the same time, while in interface locations the fire has largely transitioned to an urban fire.

“In an intermix community,” he continues, “the terrain is often more challenging and limits firefighter access to the fire as well as evacuation routes for local residents. Also, many intermix locations are far from large urban centers, limiting the amount of firefighting resources immediately available to start combatting the blaze, and this increases the potential for a fire in high-wind conditions to become a significant threat. Most likely we’ll see more scrutiny and investigation of risk in intermix towns across the nation after the Camp Fire’s decimation of Paradise, California.”

Rethinking Wildfire Analysis

According to Folkman, the need for greater market maturity around wildfire will require a rethink of how the industry currently analyzes the exposure and the tools it uses. “Historically, the industry has relied primarily upon deterministic tools to quantify U.S. wildfire risk,” he says, “which relate overall frequency and severity of events to the presence of fuel and climate conditions, such as high winds, low moisture and high temperatures.”

While such tools can prove valuable for addressing “typical” wildland fire events, such as the 2017 Thomas Fire in Southern California, their flaws have been exposed by other recent losses. “Such tools insufficiently address major catastrophic events that occur beyond the WUI into areas of dense exposure,” explains Folkman, “such as the Tubbs Fire in Northern California in 2017.
Further, the unprecedented severity of recent wildfire events has exposed the weaknesses in maintaining a historically based deterministic approach.” While the scale of the 2017-18 losses has focused (re)insurer attention on California, companies must also recognize the scope for potential catastrophic wildfire risk extends beyond the boundaries of the western U.S. “While the frequency and severity of large, damaging fires is lower outside California,” says Bove, “there are many areas where the risk is far from negligible.” While acknowledging that the broader western U.S. is seeing increased risk due to WUI expansion, he adds: “Many may be surprised that similar wildfire risk exists across most of the southeastern U.S., as well as sections of the northeastern U.S., like in the Pine Barrens of southern New Jersey.” As well as addressing the geographical gaps in wildfire analysis, Folkman believes the industry must also recognize the data gaps limiting their understanding. “There are a number of areas that are understated in underwriting practices currently, such as the far-ranging impacts of ember accumulations and their potential to ignite urban conflagrations, as well as vulnerability of particular structures and mitigation measures such as defensible space and fire-resistant roof coverings.” In generating its US$9 billion to US$13 billion loss estimate for the Camp and Woolsey Fires, RMS used its recently launched North America Wildfire High-Definition (HD) Models to simulate the ignition, fire spread, ember accumulations and smoke dispersion of the fires. “In assessing the contribution of embers, for example,” Folkman states, “we modeled the accumulation of embers, their wind-driven travel and their contribution to burn hazard both within and beyond the fire perimeter. Average ember contributions to structure damage and destruction is approximately 15 percent, but in a wind-driven event such as the Tubbs Fire this figure is much higher. 
This was a key factor in the urban conflagration in Coffey Park.”

The model also provides full contiguous U.S. coverage, and includes other model innovations such as ignition and footprint simulations for 50,000 years, flexible occurrence definitions, smoke and evacuation loss across and beyond the fire perimeter, and vulnerability and mitigation measures on which RMS collaborated with the Insurance Institute for Business & Home Safety.

Smoke damage, which leads to loss from evacuation orders and contents replacement, is often overlooked in risk assessments, despite composing a tangible portion of the loss, says Folkman. “These are very high-frequency, medium-sized losses and must be considered. The Woolsey Fire saw 260,000 people evacuated, incurring hotel, meal and transport-related expenses. Add to this smoke damage, which often results in high-value contents replacement, and you have a potential sea of medium-sized claims that can contribute significantly to the overall loss.”

A further data resolution challenge relates to property characteristics. While primary property attribute data is typically well captured, believes Bove, many secondary characteristics key to wildfire are either not captured or not consistently captured. “This leaves the industry overly reliant on both average model weightings and risk scoring tools. For example, information about defensible spaces, roofing and siding materials, protecting vents and soffits from ember attacks, these are just a few of the additional fields that the industry will need to start capturing to better assess wildfire risk to a property.”

A Highly Complex Peril

Bove is, however, conscious of the simple fact that “wildfire behavior is extremely complex and non-linear.” He continues: “While visiting Paradise, I saw properties that did everything correct with regard to wildfire mitigation but still burned and risks that did everything wrong and survived.
However, mitigation efforts can improve the probability that a structure survives.” “With more data on historical fires,” Folkman concludes, “more research into mitigation measures and increasing awareness of the risk, wildfire exposure can be addressed and managed. But it requires a team mentality, with all parties — (re)insurers, homeowners, communities, policymakers and land-use planners — all playing their part.”

Getting Wildfire Under Control

May 10, 2018

The extreme conditions of 2017 demonstrated the need for much greater data resolution on wildfire in North America

The 2017 California wildfire season was record-breaking on virtually every front. Some 1.25 million acres were torched by over 9,000 wildfire events during the period, with October to December seeing some of the most devastating fires ever recorded in the region. From an insurance perspective, according to the California Department of Insurance, as of January 31, 2018, insurers had received almost 45,000 claims relating to losses in the region of US$11.8 billion. These losses included damage or total loss to over 30,000 homes and 4,300 businesses.

On a countrywide level, the total was over 66,000 wildfires that burned some 9.8 million acres across North America, according to the National Interagency Fire Center. This compares to 2016 when there were 65,575 wildfires and 5.4 million acres burned.

Caught off Guard

“2017 took us by surprise,” says Tania Schoennagel, research scientist at the University of Colorado, Boulder. “Unlike conditions now [March 2018], 2017 winter and early spring were moist with decent snowpack and no significant drought recorded.” Yet despite seemingly benign conditions, it rapidly became the third-largest wildfire year since 1960, she explains. “This was primarily due to rapid warming and drying in the late spring and summer of 2017, with parts of the West witnessing some of the driest and warmest periods on record during the summer and remarkably into the late fall.

“Additionally, moist conditions in early spring promoted build-up of fine fuels which burn more easily when hot and dry,” continues Schoennagel.
“This combination rapidly set up conditions conducive to burning that continued longer than usual, making for a big fire year.”

While Southern California has experienced major wildfire activity in recent years, until 2017 Northern California had only experienced “minor-to-moderate” events, according to Mark Bove, research meteorologist, risk accumulation, Munich Reinsurance America, Inc. “In fact, the region had not seen a major, damaging fire outbreak since the Oakland Hills firestorm in 1991, a US$1.7 billion loss at the time,” he explains. “Since then, large damaging fires have repeatedly scorched parts of Southern California, and as a result much of the industry has focused on wildfire risk in that region due to the higher frequency and due to the severity of recent events.

“Although the frequency of large, damaging fires may be lower in Northern California than in the southern half of the state,” he adds, “the Wine Country fires vividly illustrated not only that extreme loss events are possible in both locales, but that loss magnitudes can be larger in Northern California. A US$11 billion wildfire loss in Napa and Sonoma counties may not have been on the radar screen for the insurance industry prior to 2017, but such losses are now.”

Smoke on the Horizon

Looking ahead, it seems increasingly likely that such events will grow in severity and frequency as climate-related conditions create drier, more fire-conducive environments in North America. “Since 1985, more than 50 percent of the increase in the area burned by wildfire in the forests of the Western U.S. has been attributed to anthropogenic climate change,” states Schoennagel.
“Further warming is expected, in the range of 2 to 4 degrees Fahrenheit in the next few decades, which will spark ever more wildfires, perhaps beyond the ability of many Western communities to cope.”

“Climate change is causing California and the American Southwest to be warmer and drier, leading to an expansion of the fire season in the region,” says Bove. “In addition, warmer temperatures increase the rate of evapotranspiration in plants and evaporation of soil moisture. This means that drought conditions return to California faster today than in the past, increasing the fire risk.”

While he believes there is still a degree of uncertainty as to whether the frequency and severity of wildfires in North America have actually changed over the past few decades, there is no doubt that exposure levels are increasing and will continue to do so. “The risk of a wildfire impacting a densely populated area has increased dramatically,” states Bove. “Most of the increase in wildfire risk comes from socioeconomic factors, like the continued development of residential communities along the wildland-urban interface and the increasing value and quantity of both real estate and personal property.”

Breaches in the Data

Yet while the threat of wildfire is increasing, the ability to accurately quantify that increased exposure potential is limited by a lack of the granular historical data needed to determine the probability of an event occurring, both on a countrywide basis and even in highly exposed fire regions such as California. “Even though there is data on thousands of historical fires over the past half-century,” says Bove, “it is of insufficient quantity and resolution to reliably determine the frequency of fires at all locations across the U.S.
“This is particularly true in states and regions where wildfires are less common, but still holds true in high-risk states like California,” he continues. “This lack of data, as well as the fact that the wildfire risk can be dramatically different on the opposite ends of a city, postcode or even a single street, makes it difficult to determine risk-adequate rates.”

According to Max Moritz, Cooperative Extension specialist in fire at the University of California, current approaches to fire mapping and modeling are also based too much on fire-specific data. “A lot of the risk data we have comes from a bottom-up view of the fire risk itself. Methodologies are usually based on the Rothermel Fire Spread equation, which looks at spread rates, flame length, heat release, et cetera. But often we’re ignoring critical data such as wind patterns, ignition loads, vulnerability characteristics, spatial relationships, as well as longer-term climate patterns, the length of the fire season and the emergence of fire-weather corridors.”

Ground-level data is also lacking, he believes. “Without very localized data you’re not factoring in things like the unique landscape characteristics of particular areas that can make them less prone to fire risk even in high-risk areas.”

Further, data on mitigation measures at the individual community and property level is in short supply. “Currently, (re)insurers commonly receive data around the construction, occupancy and age of a given risk,” explains Bove, “information that is critical for the assessment of a wind or earthquake risk.”

However, the information needed to properly assess wildfire risk, such as whether roof covering or siding is combustible, is typically not captured. Bove says it is important to know if soffits and vents are open-air or protected by a metal covering, for instance. “Information about a home’s upkeep and surrounding environment is critical as well,” he adds.
At Ground Level

While wildfire may not be as data intensive as a peril such as flood, it is almost as demanding, especially on computational capacity. It requires simulating stochastic or scenario events all the way from ignition through to spread, creating realistic footprints that can capture what the risk is and the physical mechanisms that contribute to its spread into populated environments.

The RMS® North America Wildfire HD Model capitalizes on this expanded computational capacity and improved data sets to bring probabilistic capabilities to bear on the peril for the first time across the entirety of the contiguous U.S. and Canada. Using a high-resolution simulation grid, the model provides a clear understanding of factors such as the vegetation levels, the density of buildings, the vulnerability of individual structures and the extent of defensible space.

The model also utilizes weather data based on re-analysis of historical weather observations to create a distribution of conditions from which to simulate stochastic years. That means that for a given location, the model can generate a weather time series that includes wind speed and direction, temperature, moisture levels, et cetera.

As wildfire risk is set to increase in frequency and severity due to a number of factors ranging from climate change to expansions of the wildland-urban interface caused by urban development in fire-prone areas, the industry now has to be able to live with that and understand how it alters the risk landscape.

On the Wind

Embers have long been recognized as a key factor in fire spread, either advancing the main burn or igniting spot fires some distance from the originating source. Yet despite this, current wildfire models do not effectively factor in ember travel, according to Max Moritz, from the University of California. “Post-fire studies show that the vast majority of buildings in the U.S.
burn from the inside out due to embers entering the property through exposed vents and other entry points,” he says. “However, most of the fire spread models available today struggle to precisely recreate the fire parameters and are ineffective at modeling ember travel.”

During the Tubbs Fire, the most destructive wildfire event in California’s history, embers carried on extreme ‘Diablo’ winds sparked ignitions up to two kilometers from the flame front. The rapid transport of embers not only created a faster-moving fire, with Tubbs covering some 30 to 40 kilometers within hours of initial ignition, but also sparked devastating ignitions in areas believed to be at zero risk of fire, such as Coffey Park, Santa Rosa. This highly built-up area experienced an urban conflagration due to ember-fueled ignitions.

“Embers can fly long distances and ignite fires far away from its source,” explains Markus Steuer, consultant, corporate underwriting at Munich Re. “In the case of the Tubbs Fire they jumped over a freeway and ignited the fire in Coffey Park, where more than 1,000 homes were destroyed. This spot fire was not connected to the main fire. In risk models or hazard maps this has to be considered. Firebrands can fly over natural or man-made fire breaks and damage can occur at some distance away from the densely vegetated areas.”

For the first time, the RMS North America Wildfire HD Model enables the explicit simulation of ember transport and accumulation, allowing users to detail the impact of embers beyond the fire perimeters. The simulation capabilities extend beyond the traditional fuel-based fire simulations, and enable users to capture the extent to which large accumulations of firebrands and embers can be lofted beyond the perimeters of the fire itself and spark ignitions in dense residential and commercial areas. As was shown in the Tubbs Fire, areas not previously considered at threat of wildfire were exposed by the ember transport.
The introduction of ember simulation capability allows the industry to quantify the complete wildfire risk appropriately across North America wildfire portfolios.
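
To make the idea of ember transport and accumulation more concrete, the following is a minimal toy sketch in Python. It is not the RMS model and makes no claim about how that model works. It assumes, purely for illustration, that embers lofted from a flame front land downwind at exponentially distributed distances, capped at the two kilometers reported for the Tubbs Fire, and that a grid cell becomes a spot-fire candidate once a hypothetical number of embers has accumulated in it. All function names, the distribution, the cell size and the threshold are assumptions.

```python
import random


def simulate_ember_landings(n_embers, mean_distance_km, max_distance_km, seed=0):
    """Sample downwind landing distances (km) for embers leaving the flame front.

    Toy assumption: distances are exponentially distributed and any draw
    beyond max_distance_km is rejected and redrawn.
    """
    rng = random.Random(seed)  # seeded so runs are repeatable
    distances = []
    while len(distances) < n_embers:
        d = rng.expovariate(1.0 / mean_distance_km)
        if d <= max_distance_km:
            distances.append(d)
    return distances


def accumulate_by_cell(distances, cell_size_km, n_cells):
    """Count how many embers land in each downwind grid cell."""
    counts = [0] * n_cells
    for d in distances:
        # Clamp to the last cell so a landing exactly at the cap is kept.
        idx = min(int(d / cell_size_km), n_cells - 1)
        counts[idx] += 1
    return counts


def cells_at_risk(counts, ignition_threshold):
    """Return indices of cells whose ember count meets the hypothetical threshold."""
    return [i for i, c in enumerate(counts) if c >= ignition_threshold]


if __name__ == "__main__":
    landings = simulate_ember_landings(
        n_embers=1000, mean_distance_km=0.5, max_distance_km=2.0, seed=42
    )
    counts = accumulate_by_cell(landings, cell_size_km=0.25, n_cells=8)
    for i, c in enumerate(counts):
        print(f"{i * 0.25:.2f}-{(i + 1) * 0.25:.2f} km downwind: {c} embers")
    print("Cells flagged for possible spot fires:", cells_at_risk(counts, 50))
```

Even in this simplified form, the sketch shows why a purely fuel-based footprint can understate exposure: cells well beyond the flame front can still collect enough embers to be flagged, which is the behavior seen at Coffey Park.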